It’s a strange working world for Amazon employees. An algorithm monitors their performance, and if they don’t deliver, bots send out notices. That’s how it works when Amazon terminates its employees.

Admittedly, being fired is never pleasant. That goes first and foremost for the employees, but the HR department rarely enjoys it either. Still, receiving a notice of termination from a bot is several levels stranger than sitting through an unpleasant termination interview.

But that is exactly what many Amazon workers in the U.S. are experiencing. According to a report by Bloomberg, which spoke with 15 drivers in the Flex delivery program, many reported suddenly receiving an automated e-mail containing a termination notice.

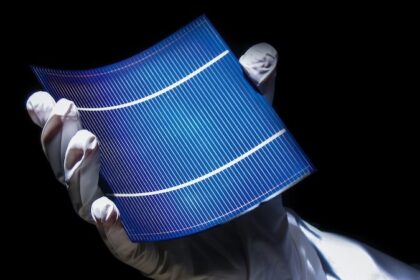

Algorithm measures performance

Apparently, Amazon prefers to terminate its Flex workers via bot.

Anyone who has followed Jeff Bezos’ HR strategy over the years won’t be too surprised by this. The Amazon CEO has always believed that machines can do (many) jobs better than humans. Starting with human resources.

For example, former employees repeatedly report on technologies at Amazon that measure productivity. That apparently applies to the freelance delivery drivers in Amazon’s Flex program, too. There’s nothing secret about it. Drivers know up front that their work is being monitored via the Flex app.

The app compares what the algorithm expects with what the driver actually delivers. For example, it calculates in advance how long a particular delivery should take. GPS data plays a role in this, as does information on traffic conditions and travel time.
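To make the idea of such a comparison concrete, here is a minimal Python sketch. It is purely illustrative and not Amazon's actual method, which has not been published: the `Delivery` fields, the traffic multiplier, and the 20 percent tolerance are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Delivery:
    # All fields are hypothetical stand-ins for the kinds of inputs
    # described above (GPS route data, traffic, measured travel time).
    distance_km: float      # route length, e.g. derived from GPS data
    avg_speed_kmh: float    # assumed typical speed on this route
    traffic_factor: float   # 1.0 = free-flowing, 1.5 = 50% slower
    actual_minutes: float   # time the driver actually needed


def expected_minutes(d: Delivery) -> float:
    """Estimate how long the delivery 'should' take."""
    return d.distance_km / d.avg_speed_kmh * 60 * d.traffic_factor


def is_late(d: Delivery, tolerance: float = 1.2) -> bool:
    """Flag the delivery if it exceeds the estimate by more than the tolerance.

    The 20% tolerance is an arbitrary assumption for illustration.
    """
    return d.actual_minutes > expected_minutes(d) * tolerance


on_time = Delivery(distance_km=15, avg_speed_kmh=30, traffic_factor=1.0, actual_minutes=33)
delayed = Delivery(distance_km=15, avg_speed_kmh=30, traffic_factor=1.0, actual_minutes=75)
print(is_late(on_time), is_late(delayed))
```

In a sketch like this, the comparison only sees the inputs it is given. Any delay that is not reflected in distance, speed, or traffic simply shows up as the driver being slow.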

What happens if the algorithm makes a mistake?

The algorithm is mostly accurate, but it is not perfect. For example, one delivery driver told Bloomberg how he was once asked to drop off a delivery in the early morning hours at a gated apartment complex.

There were no staff at the gate that early, and the driver was unable to deliver the package to the right address. Flex protocol in this case is to contact the complex’s main office. The driver did so, but the office was still closed as well.

In that case, Amazon instructs drivers to contact the customer directly. You can imagine how “easy” it is for a delivery driver to reach someone early in the morning, especially when calling from an unknown number.

Bad ratings from the algorithm

So the driver couldn’t reach anyone and then had to call Amazon’s Flex support to resolve the issue. Again, that took some time.

But since that’s a problem the algorithm doesn’t include in its calculations, the technology simply saw that the delivery was extremely late for no reason, and the driver got a bad rating.

A short time later, he received an automated email: Amazon terminated him via mail bot.

Amazon terminates, complaints futile

He did complain, but he never got a chance to explain his situation. Conversations with management are rare for Flex drivers. And from Amazon's point of view, why bother? The algorithm is probably right 95 percent of the time. And if internal sources at Amazon are to be believed, the small error rate is accepted.

First, it is much more efficient and cheaper to replace the HR department with technology. Questioning the algorithm every time a driver objects would involve too much effort and cost, especially given the small error rate.

Second, Amazon also has no problem finding new employees. The Flex program has always been popular, but the pandemic has made it even more so. Because many people lost their jobs or felt insecure at work, a flexible job at Amazon with good pay is tempting.

Third, Amazon has little to fear from this approach, because its practices are not illegal. Flex workers also have virtually no legal basis for complaint, since as self-employed contractors they are hardly protected by labor laws.

Amazon just one example of many

It’s not just Amazon, by the way. Other tech companies in the U.S. are also taking advantage of the gig economy. The same applies to the drivers at Uber and DoorDash, and to the “juicers” who charge electric scooters for companies like Lime and Bird.

There are tentative legislative initiatives, such as the Algorithm Fairness Act, to crack down on personnel decisions by algorithm in particular. But these initiatives have not yet gone beyond initial proposals.