While the U.S. Capitol was put in a state of emergency for several hours yesterday (Wednesday) due to an attack by violent Trump supporters, some streamers tried to make money from the riots. Here’s how Facebook, YouTube and Twitch responded to the live videos.

An angry and violent mob stormed the U.S. Capitol yesterday (Wednesday) and caused chaos for hours. Congress had just begun counting the Electoral College votes to confirm Joe Biden as the next U.S. president.

As the mob stormed the Capitol, the events could be followed in real time via livestreams on numerous social media channels. For Facebook, YouTube and co., this meant deciding in real time whether, and in what form, to allow these livestreams.

Livestreams incite violence

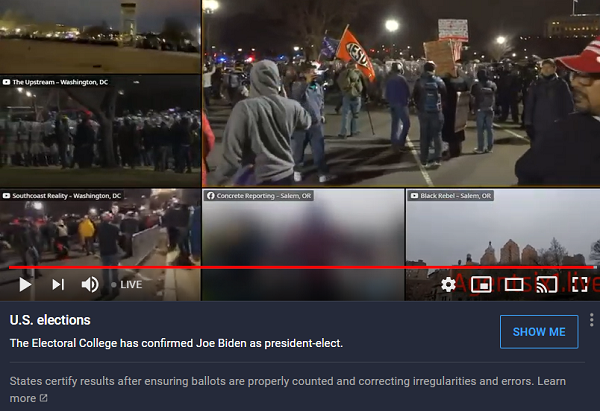

The livestreams mostly showed the events from the perspective of the rioters and could be easily found using keywords such as “Stop the Steal.”

The slogan refers to the false claim that Joe Biden and the Democratic Party “stole” the election from President Donald Trump. The claim is baseless, but Trump has repeated it many times, and it arguably led to the riot at the Capitol.

However, the streamers did not just broadcast the events at the Capitol. Most of them wanted to monetize their videos with pleas for donations and pointers to crowdfunding sites or PayPal accounts.

Meanwhile, the comment sections under the videos grew heated, and some contained calls for further violence.

YouTube changes algorithm for Capitol videos

YouTube tried to push videos from reputable news sources to the top of its feed as quickly as possible. The platform also reportedly changed its algorithm so that the rioters’ videos no longer appeared for relevant search terms.

This only partly worked: a simple search by BASIC thinking showed that the livestreams in question could still be found very quickly.

As long as YouTube classifies the videos as news content, these users may continue to stream live. However, YouTube has deleted the comments under these videos and disabled commenting.

In place of the comments, the videos now display a notice stating that the Electoral College has confirmed Joe Biden as the next U.S. president.

At the same time, YouTube is using a team of moderators to block monetization, since its terms of service prohibit monetizing videos that glorify or incite violence.

Twitch: only reports from afar

Live videos about the events at the Capitol also drew large audiences on Twitch very quickly. According to The Verge, however, there were hardly any livestreams from the scene itself on the platform. Most streamers commented on the events from home while referring to footage from news outlets such as CNN.

A few Twitch streamers were on site, but they reported from a distance and seemed rather astonished and shocked.

There may well have been other live reports on Twitch, but they are harder to find, because the platform organizes streams by category rather than by keywords or hashtags.

If problematic videos surface later, the platform can be expected to remove them immediately, as it has done in the past. Twitch, for example, has temporarily suspended Donald Trump’s account before.

Facebook declares emergency

At first, videos of the events were much easier to find on Instagram and Facebook. Here, too, many livestreamers set out to stir up further violence and aggression.

At some point, however, Instagram stopped showing new posts under relevant hashtags such as #StopTheSteal. Users can now see only the top posts.

Facebook initially let the livestreams run fairly openly, but began deleting the videos or making them harder to find once rioters forced their way into the Capitol.

At the same time, Facebook had to decide how to handle a new video that Donald Trump posted around 4 p.m. Washington time on Wednesday. In it, he asked the protesters to go home, but also repeated the claim that the election had been “stolen” from him.

Facebook initially tried to add various warnings to the video, but eventually deleted it and declared an emergency.

Twitter initially disabled replies and retweets on the video. It has since taken the video down, along with several other tweets from Donald Trump. YouTube also deleted the video relatively quickly.

Twitter’s Safety team then announced that Trump’s account would be locked for twelve hours after Trump removed the tweets himself; if he did not remove them, the account would remain locked. Twitter cited severe violations of its Civic Integrity policy as the reason.

Apparently, all the platforms agreed that Trump’s video and posts were more likely to incite violence than to calm tempers.

Platforms need clear rules

The storming of the Capitol is certainly an extreme case. But it also shows that such situations may occur more often than one would expect and can arise seemingly out of nowhere, frequently fueled further by social media.

When they do, the platforms must be able to react very quickly. To that end, they need to define clear procedures for themselves and their moderation teams in advance, so that they can intervene quickly and decisively in such exceptional cases.

Otherwise, situations stirred up on social media risk escalating in real life as well.